Overview

This agent performs automated root cause analysis on ATE (Automated Test Equipment) board validation failure logs. It cross-references multi-site test logs against a debug reference guide to decode error codes, trace fault propagation across test phases, and determine whether failures are board defects or tester artifacts. This work would otherwise require a hardware validation engineer to manually correlate hundreds of timestamped events across power, memory, PCIe, and signal integrity subsystems. The agent is powered by the Device Log Analysis template in Agent Composer, which is available for Enterprise customers; request a demo for access.

Try the Demo

Launch Demo

Analyze board validation logs yourself

Running on the production DLA template. Demo queries are cached and sped up for demonstration purposes.

The Problem

When a board validation session fails, engineers must determine whether the fault lies with the device under test (DUT), the tester hardware, or a transient environmental condition. ATE logs contain:

- Per-site parallel test events across multiple DUT sockets

- Power rail telemetry (VDDQ, VCCIN, VCCIO, VPP, VCCSA) sampled throughout each test phase

- DDR5 training sequences with per-phase margin measurements

- PCIe link training LTSSM states and equalization results

- Signal integrity eye measurements, insertion loss, jitter, and skew

- Voltage regulator diagnostics including temperature, efficiency, and ripple

- Tester HAL artifacts (e.g. multi-site inrush coupling transients) that must be distinguished from real faults
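To illustrate why that last distinction takes work, the Python sketch below groups below-limit VDDQ samples into runs and labels each run by its duration. Everything in it is an assumption made for the example: the `Sample` structure, the 1.05 V limit, the sample values, and the two-sample cutoff are hypothetical, and duration is only one possible heuristic, not a description of how the agent classifies artifacts.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    t_ms: int     # timestamp in milliseconds (hypothetical unit)
    site: int     # DUT site number
    volts: float  # measured rail voltage


def below_spec_runs(samples, spec_min):
    """Return (start_ms, end_ms, n_samples) for each consecutive run below spec_min."""
    runs = []
    current = []
    for s in samples:
        if s.volts < spec_min:
            current.append(s)
        elif current:
            runs.append((current[0].t_ms, current[-1].t_ms, len(current)))
            current = []
    if current:  # close a run that lasts until the end of the data
        runs.append((current[0].t_ms, current[-1].t_ms, len(current)))
    return runs


def classify_run(run, max_transient_samples=2):
    """Label a run 'transient' if it is short, otherwise 'sustained'."""
    _, _, n = run
    return "transient" if n <= max_transient_samples else "sustained"


# Illustrative values only: one brief dip, then a sag that does not recover.
readings = [1.10, 1.04, 1.10, 1.04, 1.03, 1.02, 1.01]
samples = [Sample(t_ms=i, site=2, volts=v) for i, v in enumerate(readings)]
for run in below_spec_runs(samples, spec_min=1.05):
    print(run, classify_run(run))
```

A short dip that recovers is a candidate artifact rather than proof of one; in practice it would still need to be checked against the tester events recorded at the same timestamps.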

How It Works

In production

- What you do: Upload an ATE failure log and optionally a debug reference guide, then ask a query, for example “Why did SITE:2 fail?”.

- What happens automatically: A multi-agent implementation takes over. The system parses the log, builds a searchable database from the log and reference files, runs root cause analysis across all test phases and sites, and produces outputs.

- Pipeline stages: Uploading files → parsing logs → building the database → root cause analysis → generating the report.

- Outputs: The agent delivers a detailed RCA report covering executive summary, per-site timeline, decoded errors, voltage/thermal trajectory, and prioritized recommended actions. It can also generate visualizations such as VDDQ voltage sag charts and multi-site comparison tables.

- Auditable: Every step is visible (tasks, trajectory, intermediate artifacts, and the final report), so validation teams can review the analysis and act on findings.

In this demo

Sample ATE files are preloaded; choose a suggested query and run the analysis.

Example Questions

Suggested queries in the demo:

- “Why did SITE:2 fail?”

- “When did this problem first appear?”

- “Is this a device failure or a tester failure?”

Output

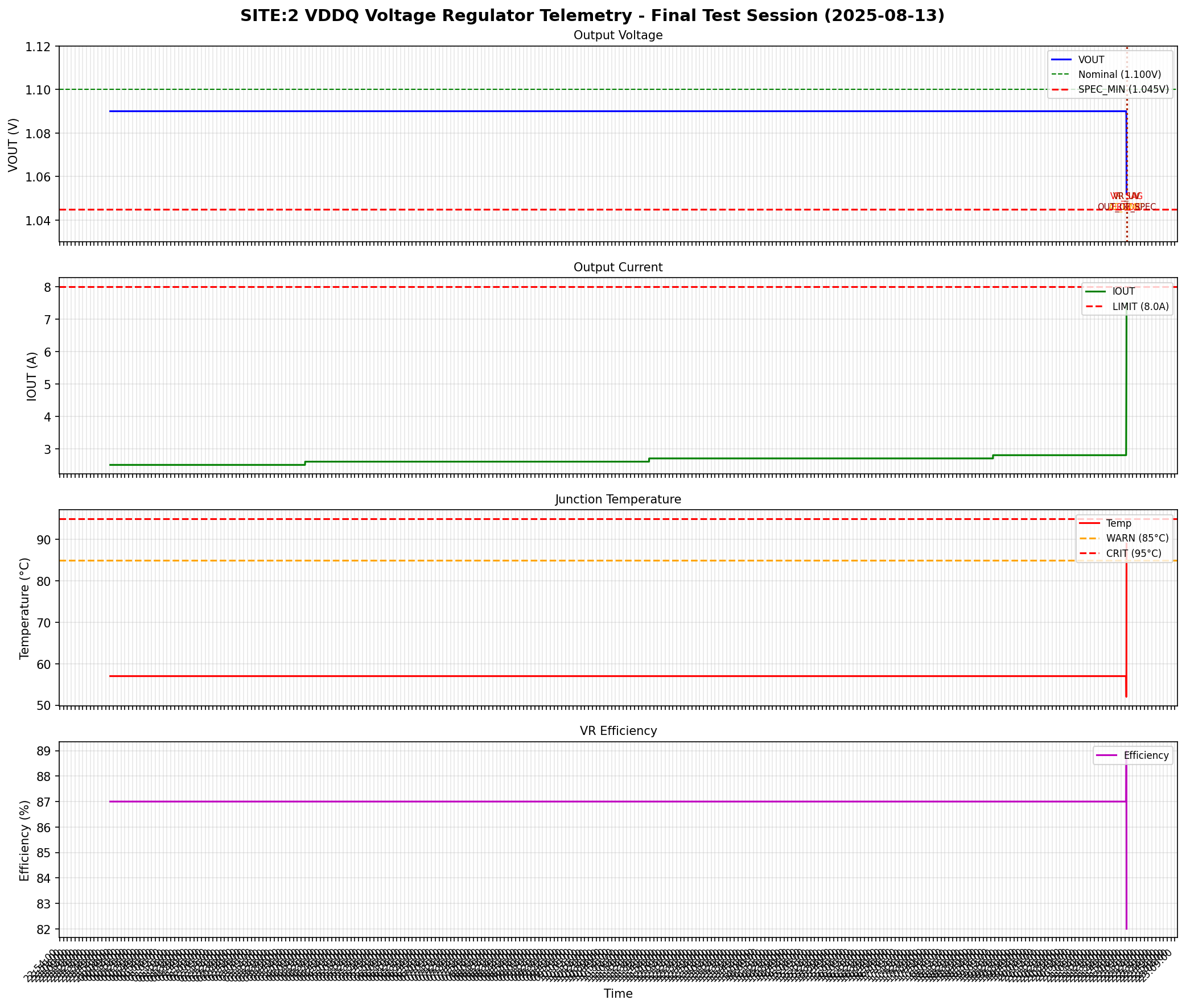

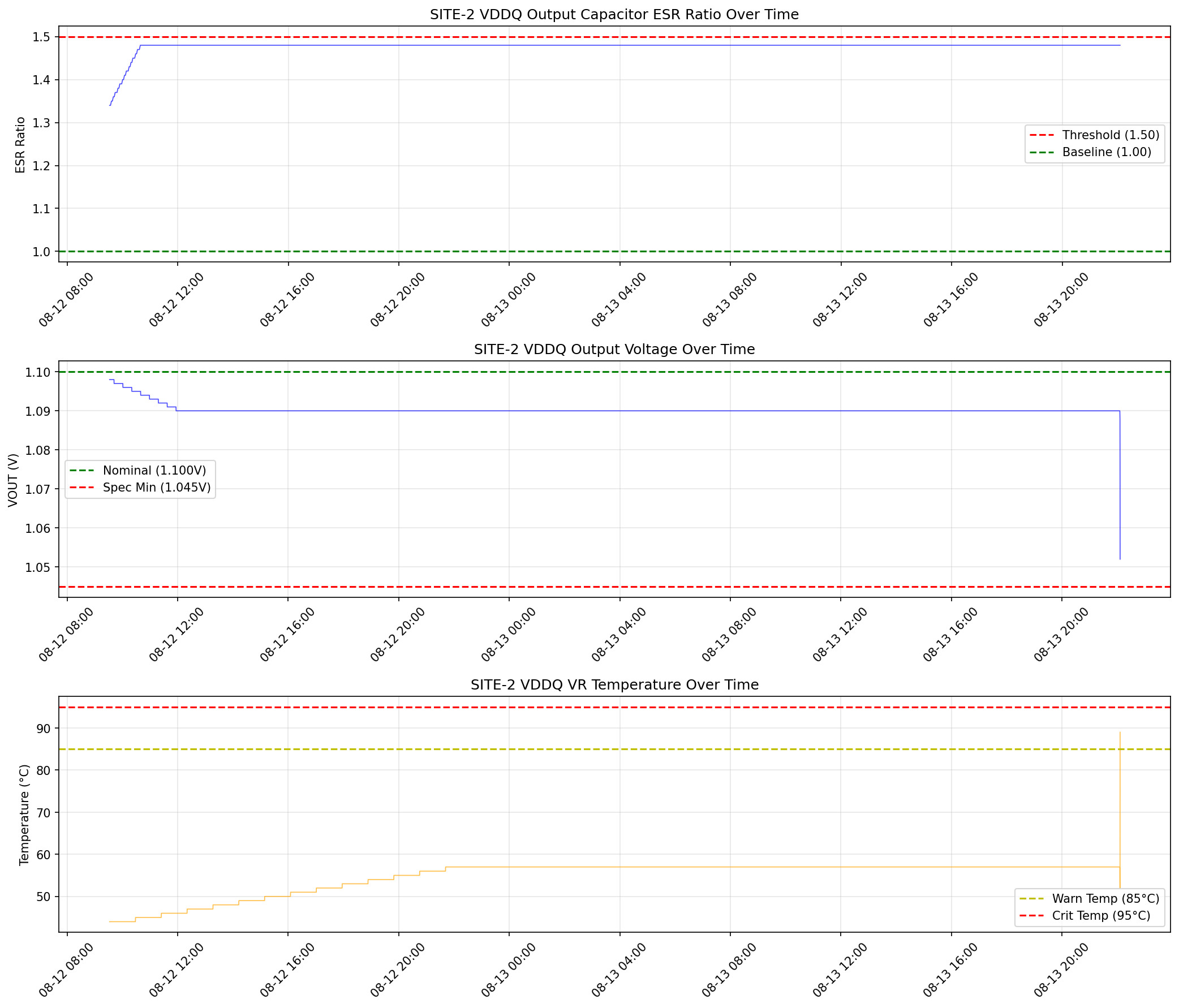

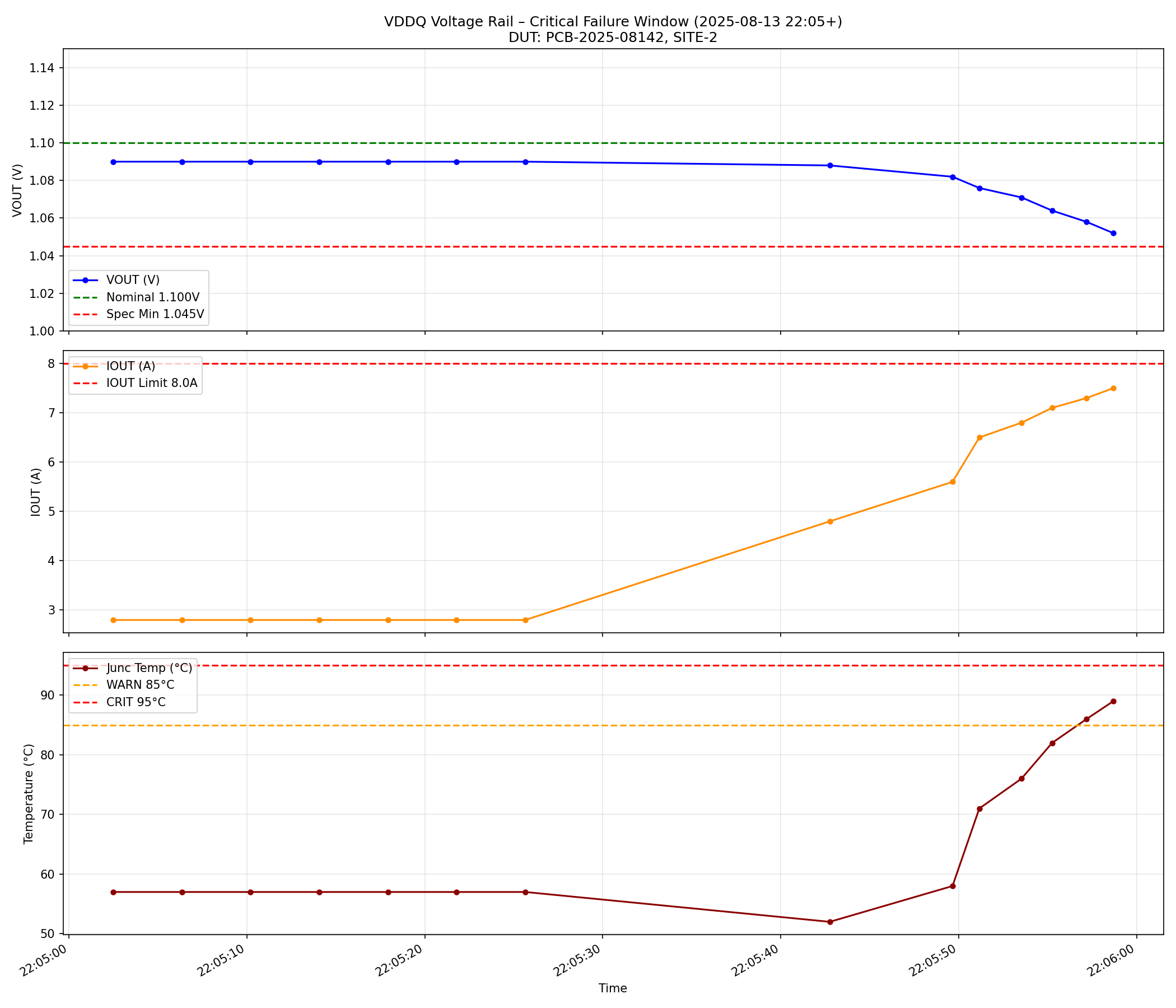

Example outputs from the three suggested queries. For “Why did SITE:2 fail?”, the chart shows VDDQ voltage regulator telemetry for the final test session: output voltage sagging to the spec minimum, output current climbing toward the limit, junction temperature spiking above the warning threshold, and efficiency degrading. All four panels confirm a single failing component. The report covers:

- Executive summary — Which site(s) failed, root cause (e.g. VDDQ voltage regulator degradation), and yield impact

- Fault timeline — Chronological sequence showing voltage sag progression, training retry escalation, and cascade into secondary failures

- Per-site comparison — Side-by-side pass/fail status and key metrics (VDDQ voltage, VR temperature, DDR5 eye width) across all 4 test sites

- Root cause chain — Causal trace from the originating hardware fault through each downstream failure (e.g. VDDQ sag → DDR5 training fail → PCIe Gen fallback → bin FAIL)

- Tester artifact analysis — Identifies HAL transient events (e.g. HAL_VDDQ_COUPLE_0x0B) and correctly classifies them as non-causal measurement artifacts rather than real faults

- Recommended actions — Prioritized table with action and rationale (e.g. replace VDDQ output capacitor on failed DUT, retest on same tester to confirm tester health)
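The root cause chain and the tester artifact analysis can be pictured together as a backward walk over "caused by" links that skips anything classified as an artifact. The Python sketch below is a minimal illustration under stated assumptions: the `caused_by` map, the function name, and the idea of taking the first non-artifact candidate are all hypothetical, and only the event labels come from the examples above.

```python
def trace_root_cause(symptom, caused_by, artifacts=frozenset()):
    """Walk from a final symptom back to its originating fault.

    caused_by maps an event to its candidate causes. Events listed in
    artifacts are treated as non-causal and ignored. Returns the chain
    ordered from root cause to symptom.
    """
    chain = [symptom]
    seen = {symptom}
    node = symptom
    while True:
        candidates = [
            cause for cause in caused_by.get(node, [])
            if cause not in artifacts and cause not in seen
        ]
        if not candidates:
            break
        node = candidates[0]  # simplification: follow the first candidate
        chain.append(node)
        seen.add(node)
    chain.reverse()
    return chain


# Hypothetical links, using the event names from the example chain above.
caused_by = {
    "bin FAIL": ["PCIe Gen fallback"],
    "PCIe Gen fallback": ["DDR5 training fail"],
    "DDR5 training fail": ["HAL_VDDQ_COUPLE_0x0B", "VDDQ sag"],
}
chain = trace_root_cause(
    "bin FAIL", caused_by, artifacts={"HAL_VDDQ_COUPLE_0x0B"}
)
print(" -> ".join(chain))
# prints: VDDQ sag -> DDR5 training fail -> PCIe Gen fallback -> bin FAIL
```

Without the `artifacts` set, the walk would stop at the HAL coupling event and blame the tester, which is the misclassification the report is meant to avoid.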

ATE sample files in the demo

- Device log (ate_device_log_30mb.txt) — A timestamped multi-site board validation log with entries from subsystems including ATE-SYS, ATE-HAL, ATE-PWR, ATE-FPGA, DUT-VR, DUT-THERM, and per-site test events (SITE-1 through SITE-4). Each line includes severity (INFO/WARN/ERROR), subsystem tags, and synthetic diagnostic codes.

- Debug reference guide (ate_debug_rules.txt) — An ATE board validation debug reference covering power rail specifications, DDR5 training sequence codes, PCIe link training codes, voltage regulator diagnostic codes, signal integrity measurement codes, test bin definitions, HAL artifact classifications, and common root cause patterns.
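The exact line layout of the sample device log is not shown on this page, so the following Python sketch assumes one: a timestamp, then bracketed severity, subsystem, and optional site tags, then a free-text message that may contain diagnostic codes. The layout, the regular expressions, and the sample lines are hypothetical; only the severity levels, subsystem names, and the `HAL_VDDQ_COUPLE_0x0B` code come from the descriptions above.

```python
import re
from typing import List, NamedTuple, Optional

# Assumed layout: <timestamp> [SEVERITY] [SUBSYSTEM] [SITE-n]? message
LINE_RE = re.compile(
    r"^(?P<ts>\S+)\s+"
    r"\[(?P<severity>INFO|WARN|ERROR)\]\s+"
    r"\[(?P<subsystem>[A-Z0-9-]+)\]\s+"
    r"(?:\[(?P<site>SITE-\d+)\]\s+)?"
    r"(?P<message>.*)$"
)
# Assumed code shape: upper-case words joined by underscores, ending in _0x<hex>
CODE_RE = re.compile(r"\b[A-Z][A-Z0-9]*(?:_[A-Z0-9]+)*_0x[0-9A-Fa-f]+\b")


class LogEvent(NamedTuple):
    ts: str
    severity: str
    subsystem: str
    site: Optional[str]
    codes: List[str]
    message: str


def parse_line(line: str) -> Optional[LogEvent]:
    """Parse one log line; return None if it does not fit the assumed layout."""
    m = LINE_RE.match(line.strip())
    if m is None:
        return None
    message = m.group("message")
    return LogEvent(
        ts=m.group("ts"),
        severity=m.group("severity"),
        subsystem=m.group("subsystem"),
        site=m.group("site"),
        codes=CODE_RE.findall(message),
        message=message,
    )


# Made-up sample lines in the assumed layout.
sample = [
    "00:00:01.000 [INFO] [ATE-SYS] session start",
    "00:00:02.250 [WARN] [ATE-HAL] [SITE-2] HAL_VDDQ_COUPLE_0x0B coupling transient",
]
for event in map(parse_line, sample):
    print(event.severity, event.subsystem, event.site, event.codes)
```

Once lines are structured this way, decoded codes can be looked up in the debug reference guide and events can be filtered by site or subsystem.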

Learn More

- Device Log Analysis (overview) — General DLA template and all three sample datasets

- 3GPP Wireless — LTE-style eNB/device logs

- Mazda Infotainment — Infotainment crash logs

- Getting Started with the Contextual AI Platform

- Agent Composer Templates — Basic Search, Agentic Search, and Device Log Analysis (Enterprise)

- Request a demo